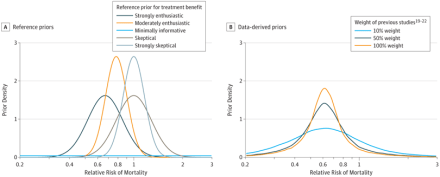

How can researchers maximize learning from experiments, especially from very expensive experiments such as clinical trials? This article shows how a Bayesian analysis of the data would have been much more informative, and likely would have saved a useful new technique for dealing with ARDS.

I am a big supporter of Bayesian methods, which will become even more important and useful with machine learning. But a colleague, Dr. Nick Eubank, pointed out that the data could also have been re-analyzed using frequentist statistics. The problem with the original analysis was not primarily that it used frequentist statistics. Rather, it was that the investigators set a fixed (and rather large) threshold for defining success. This threshold was probably unattainable. But the clinical trial could still have been “saved,” even by conventional statistics.

Here is a draft of a letter to the editor on this subject. Apologies for the very academic tone – that’s what we do for academic journals!

The study analyzed in their article was shut down prematurely because it was judged unlikely to attain the target level of performance. Their paper shows that this might have been avoided, and the technique shown to have benefit, if their analysis had been performed before terminating the trial. A related analysis could usefully have been done within the frequentist statistical framework. According to their Table 2, a frequentist analysis (equivalent to an uninformative prior) would have suggested a 96% chance that the treatment was beneficial, and an 85% chance that it had RR < 0.9.

The reason the original study appeared to be failing was not solely that it was analyzed with frequentist methods. It also failed because the target for “success” was set at a demanding threshold, namely RR < 0.67. Thus, although the full Bayesian analysis of the article was more informative, even frequentist statistics can be useful to investigate the implications of different definitions of success.
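To make the point about thresholds concrete, here is a rough sketch (my own illustration, not part of the letter and not the authors' method). It assumes that log(RR) is approximately normally distributed, which is a common but here unverified simplification, and it uses only the two figures quoted above from Table 2: a 96% chance that RR < 1 and an 85% chance that RR < 0.9. Those two points determine the assumed distribution, which can then be queried at any other definition of success. The names `log_rr` and `prob_rr_below` are hypothetical.

```python
from math import log
from statistics import NormalDist

# The two probabilities quoted from Table 2 (uninformative-prior analysis).
P_RR_BELOW_1 = 0.96    # chance the treatment is beneficial at all (RR < 1)
P_RR_BELOW_0_9 = 0.85  # chance that RR < 0.9

std_normal = NormalDist()  # standard normal, mean 0 and sd 1

# ASSUMPTION: log(RR) is roughly normal. Two known points on its cumulative
# distribution are then enough to solve for its mean and standard deviation.
z1 = std_normal.inv_cdf(P_RR_BELOW_1)
z2 = std_normal.inv_cdf(P_RR_BELOW_0_9)
sigma = (log(1.0) - log(0.9)) / (z1 - z2)
mu = log(1.0) - z1 * sigma
log_rr = NormalDist(mu, sigma)


def prob_rr_below(threshold):
    """Probability, under the assumed distribution, that RR is below threshold."""
    return log_rr.cdf(log(threshold))


# Compare several possible definitions of "success".
for threshold in (1.0, 0.9, 0.8, 0.67):
    print(f"P(RR < {threshold:.2f}) = {prob_rr_below(threshold):.2f}")
```

By construction the sketch reproduces the 96% and 85% figures. Under the normality assumption, the probability of clearing the original RR < 0.67 bar comes out far lower than either, which is the sense in which the definition of success, rather than the statistical framework, drove the apparent failure.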

Credit for this observation goes to Nick. I will ask him for permission to include one of his emails to me on this subject.

But although air is feasible, it does seem like blood is a better choice because it is likely to have stronger signals and lower noise. Air-based sensors would be non-invasive, so perhaps that is why some groups are pursuing air.
